# mindzie Documentation - Complete Corpus

> Complete documentation for the mindzie platform. Includes mindzieStudio, mindzieDataDesigner, BPM Studio, and mindzieAPI in a single file.

Generated dynamically from the current documentation set.

# Product: mindzieStudio

---

## Overview

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/overview

Source: /docs-master/mindzieStudio/filters/overview/page.md

# Filters

Filters

Filters allow you to control which cases and events are included in your analysis.

Choose from various filter types to focus on specific aspects of your process data.

There are 37 filters available for you to choose from. Browse them all below.

★

Recommended Filters

These filters are most frequently used by our users and provide the greatest value for process analysis.

Start here for the most effective filtering of your process data.

---

## Activity Frequency

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/activity-frequency

Source: /docs-master/mindzieStudio/filters/activity-frequency/page.md

# Activity Frequency

## Overview

The Activity Frequency filter removes activities that appear too rarely or too frequently across your process cases. Unlike case-level filters that remove entire cases, this event-level filter analyzes how often each activity appears across all cases and removes individual events for activities that fall outside your specified frequency range. This helps you focus on activities that matter most by removing noise from rare exceptions or filtering out overly common activities that don't provide analytical value.

The filter calculates the percentage of cases that contain each activity, then removes all events for activities whose frequency falls outside the minimum and maximum thresholds you specify. This is particularly useful for simplifying process maps, focusing analysis on meaningful activities, and removing data quality issues caused by rare or unusual events.

## Common Uses

- **Process Simplification**: Remove very rare activities that clutter process maps and make analysis difficult.

- **Noise Reduction**: Filter out exceptional activities that appear in less than 5% of cases to focus on standard process flows.

- **Core Process Analysis**: Analyze only the most common activities by filtering to activities that appear in 80% or more of cases.

- **Middle-Range Focus**: Examine activities with moderate frequency (e.g., 20-80%) to identify optional steps or exceptions.

- **Data Quality Assessment**: Identify and remove activities with unusual frequency patterns that may indicate data quality issues.

- **Process Map Clarity**: Create cleaner process maps by removing both very rare and very common activities that don't add analytical value.

## Settings

**Minimum Percent**: The minimum percentage of cases (0.0 to 1.0) an activity must appear in to be included. Activities appearing in fewer cases will be filtered out. For example, 0.2 means the activity must appear in at least 20% of cases.

**Maximum Percent**: The maximum percentage of cases (0.0 to 1.0) an activity can appear in to be included. Activities appearing in more cases will be filtered out. For example, 0.8 means the activity must appear in no more than 80% of cases.

> **Note**: The filter uses inclusive range checking, so activities with frequencies exactly equal to the minimum or maximum percentages will be included. Both minimum and maximum must be values between 0.0 (0%) and 1.0 (100%).

## Examples

### Example 1: Removing Rare Exception Activities

**Scenario**: Your purchase order process contains many rare exception activities that clutter your process map. You want to focus on standard activities that appear in at least 10% of cases.

**Settings**:

- Minimum Percent: 0.1

- Maximum Percent: 1.0

**Result**: All activities that appear in fewer than 10% of cases are removed. For example, if "Emergency Approval" only appears in 5% of cases, all events with that activity are filtered out.

**Insights**: This creates a cleaner view of your standard process flow by removing rare exceptions like emergency procedures, special escalations, or unusual corrections. You can then analyze these exceptional cases separately if needed.

### Example 2: Focusing on Core Process Activities

**Scenario**: You want to analyze only the core activities that occur in nearly all cases, filtering out optional or conditional steps.

**Settings**:

- Minimum Percent: 0.8

- Maximum Percent: 1.0

**Result**: Only activities that appear in 80% or more of cases are retained. Activities like "Create Order" (100% of cases), "Approve Order" (95% of cases), and "Ship Order" (85% of cases) are kept, while optional activities like "Apply Discount" (40% of cases) are removed.

**Insights**: This reveals your mandatory process steps and standard path, helping you understand the core workflow that most cases follow. Deviations from this core can be analyzed separately.

### Example 3: Analyzing Mid-Frequency Activities

**Scenario**: You want to focus on activities that appear in a moderate number of cases (20-80%) to understand optional process steps and common variations.

**Settings**:

- Minimum Percent: 0.2

- Maximum Percent: 0.8

**Result**: Very rare activities (under 20%) and very common activities (over 80%) are removed, leaving only mid-frequency activities.

**Insights**: This helps identify:

- Optional process steps that are frequently but not universally used

- Common process variations that occur in a substantial minority of cases

- Activities that may be candidates for standardization or removal

- Process branches that serve specific customer segments or product types

### Example 4: Removing Ubiquitous Activities

**Scenario**: Your process has some administrative activities that appear in nearly every case but don't provide analytical insights. You want to remove activities that appear in more than 95% of cases.

**Settings**:

- Minimum Percent: 0.0

- Maximum Percent: 0.95

**Result**: Activities that appear in more than 95% of cases are removed. For example, if "System Log Entry" appears in 99% of cases, all those events are filtered out.

**Insights**: This removes activities that occur so frequently they don't help differentiate between cases or process paths. It helps focus on activities that actually indicate process variations or decisions.

### Example 5: Finding Activities at Specific Frequency

**Scenario**: You want to analyze only activities that appear in exactly 50% of cases (plus or minus a small margin) to understand process branching points.

**Settings**:

- Minimum Percent: 0.45

- Maximum Percent: 0.55

**Result**: Only activities that appear in 45-55% of cases are retained. These often represent decision points where the process splits into two roughly equal paths.

**Insights**: These activities typically indicate:

- Binary process decisions (approved/rejected, domestic/international)

- Optional features chosen by approximately half of customers

- Seasonal variations that affect half the year's cases

- Process changes that were implemented mid-period

### Example 6: Comprehensive Noise Reduction

**Scenario**: You want to remove both very rare exceptions (under 5%) and very common administrative activities (over 90%) to focus on meaningful process activities.

**Settings**:

- Minimum Percent: 0.05

- Maximum Percent: 0.9

**Result**: The filter removes rare exception activities and ubiquitous administrative activities, leaving activities that appear in 5-90% of cases.

**Insights**: This creates a balanced view that:

- Excludes rare data quality issues and exceptional cases

- Removes administrative overhead activities

- Retains all meaningful business process activities

- Provides a clear picture for process optimization analysis

## Output

The filter returns a new dataset containing only events for activities whose frequency falls within the specified range. Cases may have fewer events after filtering, but no entire cases are removed unless all their activities fall outside the frequency range.

If you set Minimum Percent to 0.0 and Maximum Percent to 1.0, no filtering occurs and all activities are retained.

The output preserves all event attributes and timestamps for the retained events, maintaining temporal and contextual information for the filtered process data.

## Technical Notes

- **Filter Type**: Event-level filter (removes individual events, which affects cases)

- **Frequency Calculation**: Counts the number of cases containing each activity, not the number of times the activity occurs

- **Performance**: Analyzes all activities and cases, then filters events based on frequency calculation

- **Empty Cases**: Cases may become empty if all their activities are filtered out

- **Percentage Format**: Uses decimal format (0.0 to 1.0) rather than percentage format (0 to 100)

---

*This documentation is part of the mindzieStudio process mining platform.*

---

## Activity More Than

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/activity-more-than

Source: /docs-master/mindzieStudio/filters/activity-more-than/page.md

# Activity More Than

## Overview

The Activity More Than filter selects cases based on how many times a specific activity was performed. This frequency-based case-level filter identifies cases where a particular activity occurred more than a specified number of times, making it ideal for detecting repetitive work patterns, rework loops, or unusual process behaviors.

## Common Uses

- Identify cases with excessive rework or repeated activities

- Find cases where approval loops occurred multiple times

- Detect unusual process patterns with repeated steps

- Analyze cases with multiple review cycles

- Filter for cases requiring repeated customer contacts

- Identify potential process inefficiencies through activity repetition

## Settings

**Activity Value:** Select the activity name to count occurrences of. The dropdown displays all unique activities in your data along with their frequency counts and percentages.

**More Than Count:** Specify the threshold count. The filter returns cases where the selected activity occurred MORE than this number of times. For example, setting this to 1 returns cases where the activity happened 2 or more times.

**Remove Selected Cases:** When enabled, inverts the filter logic to exclude matching cases instead of including them.

## Examples

### Example 1: Finding Rework Cases

**Scenario:** You want to identify cases where the "Review" activity was performed more than once, indicating potential rework or quality issues.

**Settings:**

- Activity Value: "Review"

- More Than Count: 1

- Remove Selected Cases: Unchecked

**Result:**

Cases where "Review" occurred 2 or more times are included. Case #1001 with 3 review activities is included. Case #1002 with 1 review activity is excluded. Case #1003 with 5 review activities is included.

**Insights:** This reveals which cases required multiple review cycles, often indicating quality issues, incomplete submissions, or process bottlenecks. You can analyze what causes cases to need repeated reviews.

### Example 2: Multiple Customer Contacts

**Scenario:** Your customer service process should ideally resolve issues in a single contact. You want to find cases where "Customer Contact" happened more than twice, indicating escalations or unresolved issues.

**Settings:**

- Activity Value: "Customer Contact"

- More Than Count: 2

- Remove Selected Cases: Unchecked

**Result:**

Cases with 3 or more customer contacts are included. This might represent 15% of all cases but consume 40% of customer service resources. These cases warrant investigation for root cause analysis.

**Insights:** Multiple contacts often indicate first-contact resolution failures. Analyzing these cases can reveal training gaps, system issues, or complex case types requiring specialized handling.

### Example 3: Excluding Normal Repetition

**Scenario:** In your manufacturing process, a "Quality Check" activity legitimately occurs at multiple stages. You want to EXCLUDE cases with many quality checks to focus on cases that bypassed normal quality procedures.

**Settings:**

- Activity Value: "Quality Check"

- More Than Count: 2

- Remove Selected Cases: Checked

**Result:**

Cases with 3 or more quality checks are removed. The remaining cases (with 0-2 quality checks) may indicate shortcuts or bypassed quality gates. Case #5001 with 4 quality checks is excluded (normal process). Case #5002 with 1 quality check is kept for investigation.

**Insights:** By inverting the filter, you identify cases that may have bypassed standard quality procedures, potentially leading to quality issues downstream.

### Example 4: Approval Loop Detection

**Scenario:** Your purchase order process should have one approval per level. You want to find cases where "Manager Approval" occurred more than once, indicating rejected and resubmitted requests.

**Settings:**

- Activity Value: "Manager Approval"

- More Than Count: 1

- Remove Selected Cases: Unchecked

**Result:**

Cases requiring multiple manager approvals are identified. Case #7001 with 2 manager approvals had its first request rejected. Case #7002 with 4 manager approvals went through multiple revision cycles.

**Insights:** Multiple approvals often indicate unclear requirements, budget issues, or communication gaps between requesters and approvers. These cases typically have longer cycle times and higher costs.

### Example 5: Threshold-Based Analysis

**Scenario:** You want to identify extreme cases where any activity occurred more than 10 times, regardless of what activity it is. You'll run this filter multiple times with different activities.

**Settings:**

- Activity Value: "Data Entry"

- More Than Count: 10

- Remove Selected Cases: Unchecked

**Result:**

Cases where "Data Entry" happened more than 10 times are flagged as potential data quality issues or system problems. These outlier cases may represent training needs, system errors, or exceptionally complex transactions.

**Insights:** High activity counts can indicate process problems, training issues, or genuinely complex cases requiring special handling procedures.

## Output

This filter operates at the case level based on activity occurrence counts:

- Counts occurrences of the specified activity within each case

- Compares the count against the threshold using greater-than logic

- When "Remove Selected Cases" is unchecked: Returns cases where activity count > threshold

- When "Remove Selected Cases" is checked: Returns cases where activity count <= threshold

- Preserves all case and event attributes for included cases

- Setting threshold to 0 returns cases containing the activity at least once

Use this filter to identify process behaviors based on activity repetition patterns, particularly useful for rework analysis, loop detection, and identifying unusual cases requiring investigation.

---

*This documentation is part of the mindzie Studio process mining platform.*

---

## Activity Not Performed

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/activity-not-performed

Source: /docs-master/mindzieStudio/filters/activity-not-performed/page.md

# Activity Not Performed Filter

## Overview

The Activity Not Performed filter selects cases where a specified activity was never executed. This case-level filter examines each case's complete activity sequence and returns only those cases that do not contain any events with the specified activity name. The filter is particularly useful for identifying process deviations, missing mandatory steps, or cases that followed alternative paths through the process.

## Common Uses

- **Quality Control**: Find cases that skipped review or approval activities to identify quality issues.

- **Compliance Checking**: Identify cases missing mandatory regulatory or compliance activities.

- **Exception Analysis**: Discover cases that never went through error handling or escalation activities.

- **Process Completeness**: Detect cases missing critical process steps like payment verification or delivery confirmation.

- **Variant Analysis**: Understand which cases followed alternative process paths by identifying missing standard activities.

- **Bottleneck Investigation**: Find cases that bypassed certain activities, potentially indicating workarounds or shortcuts.

## Settings

**Activity Value**: Select the activity name you want to check for absence. In the dropdown menu, you will see available activity names along with the percentage and number of cases they appeared in. The filter will return all cases where the selected activity was never performed.

> **Note**: The activity name comparison is case-sensitive and requires an exact match. If you misspell an activity name, the filter's validation system will suggest the closest matching activity.

## Examples

### Example 1: Finding Cases Without Payment

**Scenario**: You want to identify all purchase orders where the invoice was never paid, which could indicate outstanding receivables or payment issues.

**Settings**:

- Activity Value: "Pay Invoice"

**Result**: The filter removes all cases that contain the "Pay Invoice" activity, leaving only cases where payment never occurred.

**Insights**: This helps identify unpaid invoices that may require follow-up, collection efforts, or investigation into why payment was skipped.

### Example 2: Identifying Cases Without Approval

**Scenario**: You need to find all cases that proceeded without going through the approval process, which could indicate compliance violations or process gaps.

**Settings**:

- Activity Value: "Approve Request"

**Result**: The filter returns only cases that never had an "Approve Request" activity.

**Insights**: These cases may represent:

- Auto-approved low-value transactions

- Process violations where approval was skipped

- System errors that bypassed approval workflows

- Emergency expedited processing

### Example 3: Detecting Missing Quality Checks

**Scenario**: You want to find manufacturing cases that skipped the quality inspection step.

**Settings**:

- Activity Value: "Quality Inspection"

**Result**: The filter selects cases without any "Quality Inspection" events.

**Insights**: This can reveal:

- Products that may have quality issues

- Process shortcuts that could lead to defects

- Cases that need retrospective quality review

- Patterns in when inspections are skipped

### Example 4: Finding Incomplete Order Processing

**Scenario**: Identify orders where the delivery activity was never performed, indicating potential fulfillment issues.

**Settings**:

- Activity Value: "Deliver Order"

**Result**: The filter returns cases where "Deliver Order" never occurred.

**Insights**: These cases might indicate:

- Cancelled orders

- Orders stuck in processing

- Fulfillment failures requiring investigation

- Backorders or out-of-stock situations

## Output

The filter returns a new dataset containing only the cases where the specified activity was not performed. Each returned case preserves all its original events and attributes, but the specified activity will not appear in any of those cases.

If no cases match the criteria (meaning all cases contain the specified activity), the filter returns an empty result set.

## Technical Notes

- **Filter Type**: Case-level filter (removes entire cases, not individual events)

- **Performance**: Efficiently implemented with early termination logic

- **Activity Matching**: Uses exact, case-sensitive string matching

- **Null Handling**: Can find cases without null activities if ActivityValue is set to null

- **Validation**: Automatically suggests similar activity names if the specified activity is not found

---

*This documentation is part of the mindzieStudio process mining platform.*

---

## Activity Performed Once

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/activity-performed-once

Source: /docs-master/mindzieStudio/filters/activity-performed-once/page.md

# Activity Performed Once

## Overview

The Activity Performed Once filter selects cases where a specified activity was executed exactly one time. This case-level filter examines each case's complete activity sequence and returns only those cases where the selected activity appears exactly once - not zero times, and not more than once. The filter is particularly useful for identifying cases that followed standard single-occurrence patterns, detecting anomalies where activities were repeated or skipped, and ensuring process compliance.

## Common Uses

- **Process Compliance**: Verify that critical one-time activities (like contract signing or initial approval) occur exactly once per case.

- **Quality Control**: Identify cases where unique activities are performed the expected single time, indicating proper process execution.

- **Exception Detection**: Find cases with unique activity patterns that differ from processes where activities are typically repeated or absent.

- **Data Validation**: Ensure that certain activities like case initiation or final closure are not duplicated or missing within cases.

- **Workflow Analysis**: Understand which cases follow expected single-occurrence patterns for specific process steps.

- **Audit Requirements**: Confirm that auditable events like final sign-off or completion certification happen exactly once.

## Settings

**Activity Value**: Select the activity name you want to check for single occurrence. In the dropdown menu, you will see available activity names along with frequency statistics. The filter will return all cases where the selected activity was performed exactly once.

> **Note**: The activity name comparison uses exact string matching. If you misspell an activity name, the filter's validation system will suggest the closest matching activity.

## Examples

### Example 1: Finding Properly Signed Contracts

**Scenario**: You want to identify all contract cases where the signature activity occurred exactly once, indicating proper execution without duplicates or omissions.

**Settings**:

- Activity Value: "Sign Contract"

**Result**: The filter returns only cases that contain exactly one "Sign Contract" activity. Cases with zero signatures or multiple signatures are excluded.

**Insights**: This helps identify properly executed contracts. Cases excluded by this filter may indicate process problems:

- Zero signatures suggest incomplete contracts requiring follow-up

- Multiple signatures could indicate rework or data quality issues

### Example 2: Validating Single Approval Steps

**Scenario**: You need to find cases where the final approval was granted exactly once, ensuring no duplicate approvals or missing approvals occurred.

**Settings**:

- Activity Value: "Final Approval"

**Result**: The filter selects only cases with a single "Final Approval" event.

**Insights**: These cases represent standard process flow. This analysis helps:

- Confirm proper approval workflow execution

- Identify cases requiring investigation (filtered out due to 0 or 2+ approvals)

- Validate that approval controls are functioning correctly

- Detect potential approval bypass or duplicate approval issues

### Example 3: Detecting Single Quality Inspections

**Scenario**: You want to find manufacturing cases where quality inspection was performed exactly once, as required by standard procedures.

**Settings**:

- Activity Value: "Quality Inspection"

**Result**: The filter returns cases with exactly one "Quality Inspection" event.

**Insights**: This reveals:

- Cases following standard quality procedures

- Compliance with single-inspection requirements

- Proper resource allocation (inspections not repeated unnecessarily)

- Cases excluded may need attention (no inspection or multiple re-inspections)

### Example 4: Verifying Unique Order Creation

**Scenario**: Identify orders where the order creation activity occurred exactly once, indicating clean order entry without duplicates or missing creation events.

**Settings**:

- Activity Value: "Create Order"

**Result**: The filter selects cases where "Create Order" appears exactly once.

**Insights**: These cases indicate:

- Proper order initiation process

- No duplicate order creation errors

- Clean data entry practices

- Cases filtered out may indicate system errors or data quality problems

## Output

The filter returns a new dataset containing only the cases where the specified activity was performed exactly once. Each returned case preserves all its original events and attributes.

Cases are excluded if:

- The specified activity never occurred (zero occurrences)

- The specified activity occurred more than once (two or more occurrences)

If no cases match the criteria, the filter returns an empty result set.

## Technical Notes

- **Filter Type**: Case-level filter (removes entire cases, not individual events)

- **Performance**: O(n*m) time complexity where n is number of cases and m is average events per case

- **Activity Matching**: Uses exact string matching for activity comparison

- **Null Handling**: Empty or null activity values in events are not counted

- **Validation**: Automatically suggests similar activity names if the specified activity is not found in the dataset

---

*This documentation is part of the mindzieStudio process mining platform.*

---

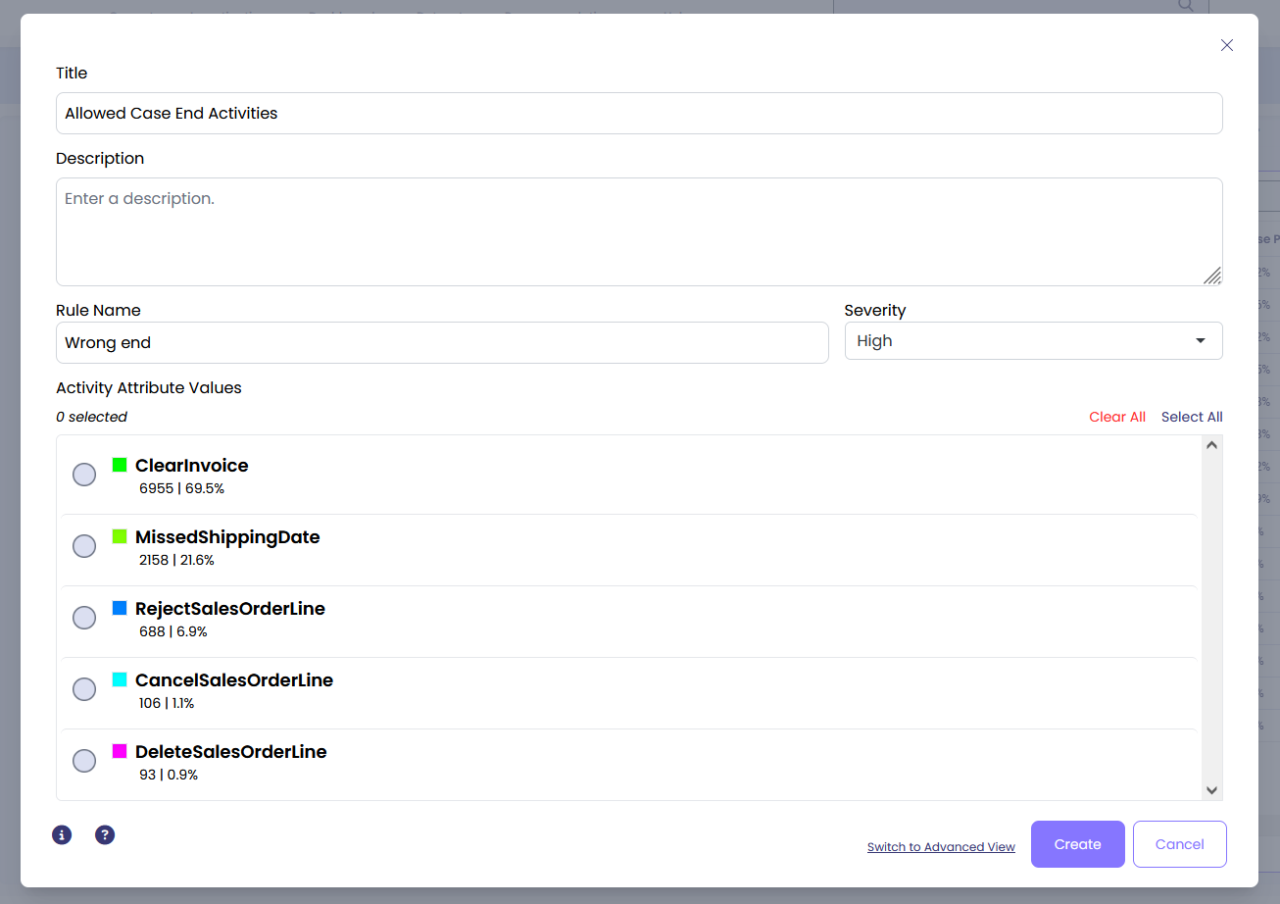

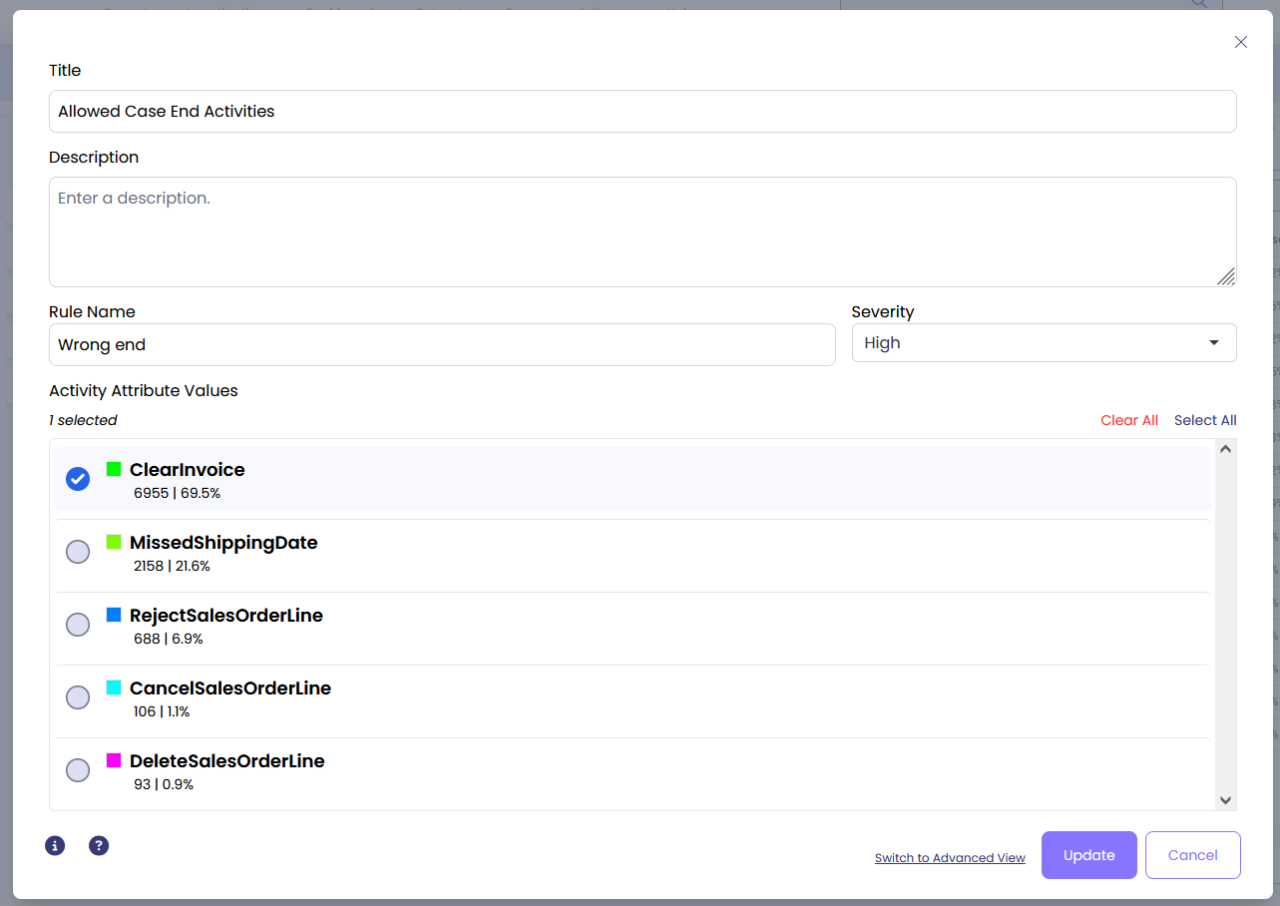

## Case End

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/case-end

Source: /docs-master/mindzieStudio/filters/case-end/page.md

# Case End

## Overview

The Case End filter selects or removes cases based on the value of a specified attribute in the last event of each case. This filter examines what activity, resource, status, or other attribute value appears in the final event of a case, allowing you to identify cases that ended in a particular way.

## Common Uses

- Identify cases that ended with activities they should not have (e.g., cases ending with "Cancelled" instead of "Completed")

- Select cases that ended with specific purchase order statuses (e.g., "Back Ordered", "Cancelled", or "On Hold")

- Find cases where a particular resource performed the final activity

- Analyze cases that ended with specific approval states or quality check results

- Filter cases based on final customer interaction type or final payment status

- Investigate cases that concluded with error or exception activities

## Settings

**Event Column**: Select the event attribute you want to examine in the last event of each case. This can be any event-level column such as Activity Name, Resource, Status, Department, or any custom event attribute in your log.

**Column Values**: Choose one or more values from the selected column. Cases where the last event contains any of these values will be selected. The filter shows the percentage of cases that end with each value to help you understand the data distribution.

**Remove Selected Cases**: When checked, the filter inverts its behavior - cases that match the criteria are removed instead of kept. Use this when you want to exclude cases that ended with specific values.

## Examples

### Example 1: Select Cases Ending with Incomplete Activities

**Scenario**: A procurement process should always end with "Clear Invoice" activity. You want to find cases that ended with any other activity to identify incomplete processes.

**Settings**:

- Event Column: Activity Name

- Column Values: Clear Invoice

- Remove Selected Cases: Checked (to exclude cases ending with Clear Invoice)

**Result**: The filter removes 99.9% of cases that properly ended with "Clear Invoice" and keeps only the 1 case that ended with a different activity.

**Insights**: This reveals process deviations where cases did not complete the expected final step, indicating potential process quality issues or incomplete workflows.

### Example 2: Find Cases Ending with Specific Resources

**Scenario**: You want to analyze cases where either Resource 48 or Resource 17 performed the final activity to understand their workload patterns or identify bottlenecks.

**Settings**:

- Event Column: Resource

- Column Values: Resource 48, Resource 17

- Remove Selected Cases: Unchecked

**Result**: The filter selects only cases where the last activity was performed by either Resource 48 or Resource 17.

**Insights**: This helps identify which cases these specific resources are responsible for completing, useful for workload analysis, quality control, or understanding resource specialization in your process.

### Example 3: Analyze Cases with Back Order Status

**Scenario**: In a purchase order process, you want to identify cases that ended with a "Back Ordered" status to understand supply chain issues.

**Settings**:

- Event Column: Order Status

- Column Values: Back Ordered

- Remove Selected Cases: Unchecked

**Result**: The filter selects all cases where the final status was "Back Ordered".

**Insights**: This reveals potential supply chain problems and allows you to analyze common characteristics of back-ordered cases, such as specific vendors, products, or time periods where back orders are more common.

### Example 4: Exclude Cancelled Cases

**Scenario**: You want to analyze only successfully completed cases by removing those that ended with "Cancelled" or "Rejected" statuses.

**Settings**:

- Event Column: Case Status

- Column Values: Cancelled, Rejected

- Remove Selected Cases: Checked

**Result**: The filter removes all cases that ended with "Cancelled" or "Rejected" statuses, leaving only cases that reached other conclusion states.

**Insights**: This creates a clean dataset for analyzing successful process execution patterns without the noise of cancelled or rejected cases.

## Output

The filter operates at the case level, meaning it removes or keeps entire cases based on the criteria. After applying the filter:

- Cases where the last event matches any of the selected values will be kept (or removed if "Remove Selected Cases" is checked)

- All other cases will be removed (or kept if "Remove Selected Cases" is checked)

- The percentage of cases removed and kept is displayed in the filter output

- Subsequent analysis will only include the filtered cases

**Important Notes**:

- The filter examines only the final event in each case, not all events

- Multiple values can be selected, and cases matching any of the values will be included

- The filter treats values as exact matches - they must match precisely

- Null or empty values in the selected column are ignored and will not match any criteria

---

*This documentation is part of the mindzie Studio process mining platform.*

---

## Case Start

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/case-start

Source: /docs-master/mindzieStudio/filters/case-start/page.md

# Case Start

## Overview

The Case Start filter selects or removes cases based on the value of a specified attribute in the first event of each case. This filter examines the starting point of each case and matches against one or more specific values you define.

This is a case-level filter, which means it evaluates entire cases and either keeps or removes them based on how each case begins. It's particularly useful when you need to analyze processes that started in a particular way, or exclude cases that began with specific conditions.

## Common Uses

- **Analyze cases by initial activity**: Select cases that started with specific activities, such as "Order Received" or "Request Submitted", to understand process variations based on how cases begin.

- **Filter by entry point resource**: Identify cases that were initiated by particular teams, departments, or individuals to analyze performance patterns and workload distribution at the process entry point.

- **Focus on specific order types**: Select cases that began with particular order types, customer segments, or priority levels to perform targeted analysis on specific business scenarios.

- **Exclude problematic starts**: Remove cases that started with known problematic activities or conditions, allowing you to focus your analysis on normally-initiated cases.

- **Compare different process entry points**: Analyze how cases that start with different activities behave differently throughout the process lifecycle.

- **Identify entry channel patterns**: Select cases by their initial channel (web, phone, email, in-person) to compare customer journey patterns across different origination points.

## Settings

**Activity Attribute**: Select the event attribute to examine in the first event of each case. Common choices include Activity Name, Resource, or any custom event attribute that exists in your event log. This determines what aspect of the starting event you want to match against.

**Attribute Values**: Choose one or more specific values from the selected attribute that should match the first event. Cases that begin with any of these values will be selected (or removed if the Remove option is enabled). The dropdown shows available values along with their frequency as starting events.

**Remove Selected Cases**: Check this box to invert the filter behavior. When enabled, cases that match your criteria will be removed instead of kept, allowing you to focus on cases that did NOT start with the specified values.

## Examples

### Example 1: Cases Starting with Specific Activity

**Scenario**: You want to analyze only those purchase order cases that started with the activity "Order Received" to understand the standard process flow, excluding cases that began differently (such as expedited orders or returns).

**Settings**:

- Activity Attribute: Activity Name

- Attribute Values: Order Received

- Remove Selected Cases: Unchecked

**Result**: The filter keeps only cases where the first event has Activity Name = "Order Received". If you had 10,000 total cases and 8,500 started with "Order Received", you would now have 8,500 cases (85% of your original data).

**Insights**: By focusing on standard-entry cases, you can analyze the typical process flow without the complexity introduced by alternative starting points. This is useful for establishing baseline performance metrics and identifying the most common process variant.

### Example 2: Excluding Cases by Starting Resource

**Scenario**: You've discovered that cases started by a specific automated system (Resource: "AutoImport_Bot") have incomplete data in early stages. You want to remove these cases to focus on manually-initiated cases that have complete information.

**Settings**:

- Activity Attribute: Resource

- Attribute Values: AutoImport_Bot

- Remove Selected Cases: Checked

**Result**: All cases that started with the resource "AutoImport_Bot" are removed from your analysis. If 1,200 of your 10,000 cases (12%) were started by this bot, you now have 8,800 cases remaining.

**Insights**: By removing the auto-imported cases, you ensure your analysis focuses on cases with complete early-stage data. This prevents skewed metrics caused by incomplete or differently-structured automated entries and provides more accurate insights into the human-initiated process flow.

### Example 3: Multiple Starting Activities

**Scenario**: Your customer service process has three valid entry points: "Phone Call Received", "Email Received", and "Chat Started". You want to analyze only these standard entries and exclude any cases that started differently (such as escalations or transfers from other departments).

**Settings**:

- Activity Attribute: Activity Name

- Attribute Values: Phone Call Received, Email Received, Chat Started (select multiple)

- Remove Selected Cases: Unchecked

**Result**: Only cases that began with one of these three activities are kept. If 9,500 of your 10,000 cases started through these channels, you now have 9,500 cases, excluding the 500 cases that started through non-standard entry points.

**Insights**: This allows you to focus your analysis on the standard customer service journey while excluding edge cases like internal escalations or transferred cases that don't follow the normal process pattern. You can now establish accurate benchmarks for standard case handling.

### Example 4: Analyzing High-Priority Order Starts

**Scenario**: You want to specifically analyze how high-priority orders are processed differently from regular orders. Your event log has a custom attribute "Priority" that is set in the first event.

**Settings**:

- Activity Attribute: Priority (custom event attribute)

- Attribute Values: High, Urgent

- Remove Selected Cases: Unchecked

**Result**: Only cases that started with Priority = "High" or Priority = "Urgent" are kept for analysis. If 2,500 of your 10,000 cases (25%) were high-priority, you now have 2,500 cases to analyze.

**Insights**: By isolating high-priority cases from the start, you can measure their end-to-end performance separately, identify if they receive preferential treatment, calculate their true cycle times, and ensure that SLAs for urgent orders are being met throughout the entire process.

## Output

The Case Start filter produces a refined event log containing only the cases that match (or don't match, if Remove is enabled) your specified starting criteria. Each case in the output has a first event that matches one of your selected attribute values.

**What gets filtered**: Entire cases are either included or excluded based on their first event. If a case doesn't start with one of your specified values, all events from that case are removed from the analysis.

**What stays unchanged**: The events within each kept case remain exactly as they were - this filter doesn't modify event details, timing, or sequence. It simply performs a binary decision at the case level.

**Percentage information**: When configuring the filter, mindzieStudio displays the percentage of cases that start with each available value, helping you understand the impact of your selection before applying the filter.

---

*This documentation is part of the mindzieStudio process mining platform.*

---

## Cases With Attribute

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/cases-with-attribute

Source: /docs-master/mindzieStudio/filters/cases-with-attribute/page.md

# Cases with Attribute

## Overview

The Cases with Attribute filter selects or removes cases based on attribute values at either the case level or event level. This versatile filter supports comprehensive comparison operations including exact matching, text pattern matching, numerical comparisons, date operations, and multi-value selections.

The filter automatically detects whether the specified attribute exists in the case data or event data and applies the appropriate filtering logic. When filtering on event attributes, entire cases are included or excluded based on whether they contain events matching the criteria - the filter does not remove individual events.

## Common Uses

**Include cases where:**

- All cases from a specific region or department

- Cases with total order value exceeding a threshold

- Cases containing a specific activity or resource

- Cases started or completed within a date range

- Cases where a boolean flag is true (e.g., "Expedited" or "Cancelled")

- Cases with customer type matching one of several categories

**Exclude cases where:**

- Cases from test accounts or inactive vendors

- Cases with amounts below minimum processing thresholds

- Cases that don't contain required activities

- Cases outside your analysis timeframe

- Cases marked as cancelled or invalid

- Cases from specific organizational units being reorganized

## Settings

**Attribute:** Select the attribute you want to filter on from the dropdown menu. This can be any case-level attribute (like Region, Customer, Total Amount) or event-level attribute (like Activity Name, Resource, Event Status). The filter automatically determines whether the attribute exists at the case or event level.

**Comparison Method:** Choose how to compare attribute values against your criteria. Available comparison methods depend on the attribute's data type:

- **Text attributes:** Equal, Not Equal, Begins With, Ends With, Contains, Is One Of

- **Numeric attributes:** Equal, Not Equal, Greater Than, Greater Than or Equal, Less Than, Less Than or Equal, Between

- **Date attributes:** Day Equal, Day Greater Than, Day Greater Than or Equal, Day Less Than, Day Less Than or Equal, Between

- **Boolean attributes:** Equal (True/False)

**Compare Value:** Enter the value to compare against when using single-value comparisons (Equal, Not Equal, Greater Than, etc.). For text comparisons, matching is case-insensitive.

**Compare Values (Is One Of):** When using "Is One Of" comparison, select multiple values from the list or enter multiple values. Cases matching any of the specified values will be included.

**Range Values (Between):** For Between comparisons, specify both the lower and upper bounds. The comparison is inclusive - cases with values equal to either bound are included.

**Activity Filter (Event Attributes Only):** When filtering on event-level attributes, you can optionally specify an activity name to limit the evaluation to events from that specific activity. If left blank, all events are considered regardless of their activity.

**Remove Selected Cases:** Check this box to invert the filter logic - instead of including cases that match the criteria, the filter will exclude them. This is useful for removing unwanted cases from your analysis.

## Examples

### Example 1: Filter by Region (Text Exact Match)

**Scenario:** You want to analyze only cases from the European region to compare performance against other regions.

**Settings:**

- Attribute: Region

- Comparison Method: Equal

- Compare Value: "Europe"

- Remove Selected Cases: Unchecked

**Result:**

- Cases Included: All cases where Region = "Europe"

- Cases Excluded: All cases from other regions (North America, Asia, etc.)

**Insights:** This creates a focused dataset for regional analysis, allowing you to identify region-specific patterns and compare metrics against other regions.

### Example 2: Filter High-Value Orders (Numeric Comparison)

**Scenario:** You want to focus on high-value purchase orders exceeding $50,000 to analyze approval patterns and processing times.

**Settings:**

- Attribute: Total Order Amount

- Comparison Method: Greater Than

- Compare Value: 50000

- Remove Selected Cases: Unchecked

**Result:**

- Cases Included: All cases where Total Order Amount > $50,000

- Cases Excluded: All cases with amounts of $50,000 or less

**Insights:** Isolating high-value transactions helps identify whether approval bottlenecks or compliance issues are specific to large orders, enabling targeted process improvements.

### Example 3: Filter by Multiple Vendor Categories (Multi-Value Selection)

**Scenario:** You need to analyze cases from preferred vendors in categories A, B, and C while excluding other vendor categories.

**Settings:**

- Attribute: Vendor Category

- Comparison Method: Is One Of

- Compare Values: ["Category A", "Category B", "Category C"]

- Remove Selected Cases: Unchecked

**Result:**

- Cases Included: All cases where Vendor Category is A, B, or C

- Cases Excluded: All cases from other vendor categories

**Insights:** This allows focused analysis on preferred vendor performance while maintaining a sufficient case volume for meaningful insights across multiple related categories.

### Example 4: Find Cases with Specific Activity (Event Attribute)

**Scenario:** You want to find all cases that went through manual approval to understand how often this exception path occurs.

**Settings:**

- Attribute: Activity Name

- Comparison Method: Equal

- Compare Value: "Manual Approval"

- Remove Selected Cases: Unchecked

**Result:**

- Cases Included: All cases containing at least one "Manual Approval" event

- Cases Excluded: All cases that never had manual approval

**Insights:** Identifying cases requiring manual approval helps quantify automation rates and understand which types of cases require human intervention.

### Example 5: Filter Recent Cases (Date Range)

**Scenario:** You want to analyze only cases that started in the last quarter for a current performance assessment.

**Settings:**

- Attribute: Case Start Date

- Comparison Method: Between

- Lower Value: 2024-07-01

- Upper Value: 2024-09-30

- Remove Selected Cases: Unchecked

**Result:**

- Cases Included: All cases with start dates from July 1 through September 30, 2024

- Cases Excluded: All cases started before July 1 or after September 30, 2024

**Insights:** Time-based filtering ensures your analysis reflects current process performance rather than historical patterns that may no longer be relevant.

### Example 6: Exclude Test Accounts (Inverse Filter)

**Scenario:** Your dataset includes test cases that should not be part of operational analysis. You want to remove all cases where the "Test Account" flag is true.

**Settings:**

- Attribute: Is Test Account

- Comparison Method: Equal

- Compare Value: True

- Remove Selected Cases: Checked

**Result:**

- Cases Included: All cases where Is Test Account = False or Null

- Cases Excluded: All cases where Is Test Account = True

**Insights:** Removing test data ensures your metrics and analysis reflect actual operational performance and aren't skewed by testing activities.

## Output

The filter modifies the case selection in your current analysis view. The case count indicator at the top of the screen updates to show how many cases remain after filtering. All subsequent calculators, visualizations, and analysis tools will operate only on the filtered case set.

The filter operates at the case level - even when filtering on event attributes, entire cases are included or excluded based on whether they contain matching events. Individual events are not removed from cases.

When multiple filters are applied, they work together using AND logic - a case must pass all filter criteria to be included in the analysis.

---

*This documentation is part of the mindzie Studio process mining platform.*

---

## Cases With Changed Attribute

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/cases-with-changed-attribute

Source: /docs-master/mindzieStudio/filters/cases-with-changed-attribute/page.md

# Cases with Changed Attribute

## Overview

The Cases with Changed Attribute filter selects cases where an event attribute has different values across events within the case. This case-level filter examines all events in each case and keeps only those cases where the specified attribute varies from event to event. The filter is particularly useful for identifying dynamic processes where attribute values change during case execution, such as status transitions, location changes, or resource handoffs.

The filter focuses on non-null values - it filters out null values first, then checks if the remaining values are identical. Cases where all events have the same value (or all null values) are excluded from the results.

## Common Uses

- **Status Progression Analysis**: Identify cases where status values changed during processing, indicating progression through different stages.

- **Resource Handoff Detection**: Find cases where different resources or departments handled different events, revealing collaboration patterns.

- **Location Change Tracking**: Discover cases where the location attribute changed, indicating physical movement or transfer between sites.

- **Priority Escalation**: Detect cases where priority levels changed during execution, showing escalation or de-escalation patterns.

- **Process Deviation Identification**: Find cases where attribute values varied unexpectedly, potentially indicating exceptions or non-standard processing.

- **Multi-Stage Process Analysis**: Identify cases that went through multiple stages by detecting changes in stage-related attributes.

## Settings

**Event Column Name**: Select the event attribute you want to evaluate for variation. The filter will return cases where this attribute has different values across events. Only non-null values are considered when checking for variation.

> **Note**: The attribute must exist in the event table and be of a supported data type (String, Int32, Int64, DateTime, TimeSpan, Single, Double, or Boolean). If you mistype the column name, the filter's validation system will suggest similar column names.

## Examples

### Example 1: Finding Cases with Status Changes

**Scenario**: You want to identify all order processing cases where the order status changed during processing, indicating that the order progressed through different stages.

**Settings**:

- Event Column Name: "Order Status"

**Result**: The filter returns cases where different events have different "Order Status" values (e.g., "New" -> "Processing" -> "Shipped"). Cases where all events have the same status are excluded.

**Insights**: This helps identify:

- Cases that successfully progressed through the workflow

- Normal processing patterns with status transitions

- Cases that may have experienced multiple status changes

- Orders that moved through different fulfillment stages

### Example 2: Detecting Resource Handoffs

**Scenario**: You need to find cases where different resources or employees worked on different activities, indicating collaboration or handoff situations.

**Settings**:

- Event Column Name: "Resource"

**Result**: The filter selects cases where the "Resource" attribute varies across events, meaning multiple people or systems handled different activities.

**Insights**: These cases reveal:

- Collaborative work patterns where multiple people contribute

- Handoffs between departments or teams

- Cases requiring specialized expertise from different resources

- Potential bottlenecks where resource changes occurred

### Example 3: Identifying Location Changes

**Scenario**: You want to track shipments or items that moved between different locations during processing.

**Settings**:

- Event Column Name: "Location"

**Result**: The filter returns cases where events occurred at different locations, indicating physical movement or transfer.

**Insights**: This can reveal:

- Items that traveled through multiple warehouses or distribution centers

- Cross-site processing patterns

- Geographic routing of cases

- Cases requiring multi-location coordination

### Example 4: Finding Priority Escalations

**Scenario**: Identify support tickets or requests where the priority level changed during handling, indicating escalation or de-escalation.

**Settings**:

- Event Column Name: "Priority"

**Result**: The filter selects cases where "Priority" values changed between events (e.g., from "Low" to "High").

**Insights**: These cases might indicate:

- Escalated issues requiring increased attention

- De-escalated cases after initial assessment

- Dynamic priority adjustments based on customer feedback

- Cases requiring management intervention

### Example 5: Detecting Department Transfers

**Scenario**: You want to find cases that were transferred between departments during processing, indicating complex cases requiring cross-functional support.

**Settings**:

- Event Column Name: "Department"

**Result**: The filter returns cases where different events were handled by different departments.

**Insights**: This helps identify:

- Cases requiring expertise from multiple departments

- Cross-functional collaboration patterns

- Potential handoff delays between departments

- Complex cases that could benefit from process optimization

### Example 6: Tracking Approval Level Changes

**Scenario**: Identify cases where the approval level changed, such as requests that required escalation to higher management levels.

**Settings**:

- Event Column Name: "Approval Level"

**Result**: The filter selects cases where the "Approval Level" attribute varied across events.

**Insights**: These cases may represent:

- Requests that required multiple approval tiers

- Escalation patterns for high-value or complex requests

- Cases that exceeded standard approval thresholds

- Multi-stage approval workflows

## Output

The filter returns a new dataset containing only the cases where the specified event attribute has different values across events. Each returned case preserves all its original events and attributes, and you will see variation in the selected attribute's values.

Cases where all non-null values are identical are excluded. Cases with only null values or a single unique value are also excluded.

If no cases match the criteria, the filter returns an empty result set.

## Technical Notes

- **Filter Type**: Case-level filter (removes entire cases, not individual events)

- **Null Handling**: Ignores null values when checking for variation - only considers non-null values

- **Variation Detection**: Compares the first non-null value against all other non-null values

- **Supported Data Types**: String, Int32, Int64, DateTime, TimeSpan, Single, Double, Boolean

- **Validation**: Automatically suggests similar column names if the specified column is not found

---

*This documentation is part of the mindzieStudio process mining platform.*

---

## Cases With Identical Event Dates

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/cases-with-identical-event-dates

Source: /docs-master/mindzieStudio/filters/cases-with-identical-event-dates/page.md

# Cases with Identical Event Dates

## Overview

The Cases with Identical Event Dates filter identifies cases where multiple activities occurred on the same calendar day, regardless of the specific time. This filter helps you analyze temporal clustering patterns in your processes, identify intensive work periods, or distinguish between cases with concentrated activity versus those spread across multiple days. Unlike the timestamp filter, this filter compares only the calendar date, ignoring the time of day.

## Common Uses

- Identify cases with concentrated daily activity indicating intensive processing

- Find batch processing patterns where multiple steps occur on the same day

- Analyze work intensity by identifying cases with same-day activity clustering

- Separate rush-processed cases from normal multi-day cases

- Detect cases where multiple events were completed in a single work session

- Compare processing patterns between concentrated and distributed workflows

## Settings

**Include or Exclude Cases:** Choose whether to include cases that have same-day activities or exclude them.

- **Include cases with same-day activities:** Returns only cases where at least two events occurred on the same calendar day

- **Exclude cases with same-day activities:** Returns only cases where all events occurred on different calendar days

## Examples

### Example 1: Identifying Express Orders

**Scenario:** Your order fulfillment process normally spans multiple days (Order Received on Day 1, Processing on Day 2, Shipped on Day 3). However, express orders are rushed through the entire workflow in a single day. You want to identify these express cases for performance analysis.

**Settings:**

- Include cases with same-day activities

**Result:**

The filter returns all cases where multiple fulfillment steps occurred on the same calendar day. For example, Case #EXP-1234 shows "Order Received" at 9:00 AM, "Payment Processed" at 9:15 AM, "Picked" at 10:30 AM, and "Shipped" at 2:00 PM, all on October 15, 2024. If 300 out of 5,000 orders were processed same-day, those 300 cases are returned.

**Insights:** These cases represent your express or rush processing workflow, which operates differently from standard multi-day fulfillment. By analyzing these separately, you can measure express service performance, identify bottlenecks in rush processing, and calculate the true capacity of same-day fulfillment.

### Example 2: Analyzing Normal Multi-Day Workflows

**Scenario:** You want to analyze your standard loan approval process, which typically spans several days with proper review periods. You need to exclude rush cases where multiple steps were completed on the same day, focusing only on cases with proper daily distribution.

**Settings:**

- Exclude cases with same-day activities

**Result:**

The filter returns only cases where all activities occurred on different calendar days. For example, Case #LOAN-5678 shows "Application Submitted" on Oct 10, "Document Review" on Oct 11, "Credit Check" on Oct 12, and "Final Approval" on Oct 13. If 4,500 out of 5,000 loans followed the normal multi-day pattern, those 4,500 cases are returned.

**Insights:** By excluding same-day cases, you can analyze your standard workflow without noise from expedited processing. This provides accurate insights into normal processing times, proper review periods, and typical bottlenecks when cases progress through your intended multi-day workflow.

### Example 3: Detecting Bulk Processing Days

**Scenario:** Your invoice processing system normally handles invoices individually across multiple days. However, at month-end, accounting staff often bulk-process multiple steps for many invoices on the same day. You want to identify cases processed during these intensive bulk sessions.

**Settings:**

- Include cases with same-day activities

**Result:**

The filter identifies cases where multiple processing steps (Invoice Received, Validation, Approval, Payment Scheduled) occurred on the same calendar day. For example, during month-end on October 31, 150 invoices show all steps completed on that single day, while throughout the rest of October, only 20 invoices had same-day processing. Those 170 cases with same-day activity are returned.

**Insights:** This reveals your bulk processing patterns and helps distinguish between normal daily processing and intensive batch sessions. You can analyze these patterns separately, optimize bulk processing workflows, and understand the impact of concentrated processing on quality and accuracy.

### Example 4: Measuring Patient Journey Duration

**Scenario:** Your healthcare process tracks patient journeys through Emergency Department visits. You want to identify cases where the entire visit (Triage, Examination, Treatment, Discharge) occurred within a single calendar day versus cases requiring overnight stays or multi-day care.

**Settings:**

- Include cases with same-day activities

**Result:**

The filter returns all ED visits where all activities happened on the same calendar day. For example, Patient #12345 was triaged at 2:00 PM, examined at 2:30 PM, treated at 3:15 PM, and discharged at 4:45 PM, all on October 15. If 2,800 out of 3,000 ED visits were same-day, those 2,800 cases are returned.

**Insights:** Most ED visits should be same-day cases, so this helps you identify the 200 cases that required multi-day care or overnight observation. By analyzing each group separately, you can understand the characteristics of same-day versus extended care cases and optimize resource allocation accordingly.

## Output

This filter operates at the case level and filters entire cases based on calendar date analysis:

- **Include mode:** Returns only cases containing at least two events on the same calendar day

- **Exclude mode:** Returns only cases where all events occurred on different calendar days

- Comparison uses calendar dates only (ignores time of day)

- Case and event attributes are preserved

- Event sequences and all other properties remain unchanged

- More lenient than timestamp comparison (events at different times on the same day count as same-day)

Use this filter to analyze temporal clustering patterns, identify concentrated versus distributed workflows, and separate rush processing from normal multi-day cases.

---

*This documentation is part of the mindzie Studio process mining platform.*

---

## Cases With Identical Event Timestamps

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/cases-with-identical-event-timestamps

Source: /docs-master/mindzieStudio/filters/cases-with-identical-event-timestamps/page.md

# Cases with Identical Event Timestamps

## Overview

The Cases with Identical Event Timestamps filter identifies cases where multiple activities occurred at exactly the same timestamp, down to the millisecond. This filter is valuable for detecting data quality issues, identifying simultaneous process execution, or finding cases where events were logged in bulk with identical timestamps. You can choose to include cases with same-time activities or exclude them, depending on whether you're investigating timestamp anomalies or focusing on properly sequenced cases.

## Common Uses

- Detect data quality issues where multiple events have identical timestamps

- Identify cases with suspicious timestamp patterns that may indicate data loading errors

- Find cases where parallel activities were executed simultaneously

- Exclude cases with timestamp anomalies from process analysis

- Investigate batch processing or bulk data loading scenarios

- Clean datasets by focusing only on cases with properly sequenced timestamps

## Settings

**Include or Exclude Cases:** Choose whether to include cases that have same-time activities or exclude them.

- **Include cases with same-time activities:** Returns only cases where at least two events occurred at exactly the same timestamp

- **Exclude cases with same-time activities:** Returns only cases where all events have different timestamps (properly sequenced)

## Examples

### Example 1: Finding Data Quality Issues

**Scenario:** Your process mining dataset was imported from a legacy system. You suspect that some cases have data quality issues where multiple events were logged with identical timestamps, which shouldn't happen in your sequential approval workflow.

**Settings:**

- Include cases with same-time activities

**Result:**

The filter returns all cases where two or more events share exactly the same timestamp. For example, if Case #12345 has "Submit Request" and "Manager Approval" both timestamped at 2024-10-15 14:32:18.450, this case would be included in the results. If you had 5,000 cases with 120 showing timestamp anomalies, those 120 cases are returned.

**Insights:** These cases likely represent data quality issues that need investigation. Events in a sequential approval workflow shouldn't occur at the exact same millisecond. This could indicate bulk data loading, system clock issues, or improper event logging. Review these cases with your data team to determine the root cause.

### Example 2: Analyzing Clean Sequential Cases

**Scenario:** You want to perform accurate process variant analysis and need to exclude cases with timestamp anomalies. Your goal is to analyze only cases where events occurred at distinct times, ensuring proper sequential ordering.

**Settings:**

- Exclude cases with same-time activities

**Result:**

The filter returns only cases where all events have unique timestamps. If you had 5,000 cases with 120 showing timestamp collisions, the filter returns the remaining 4,880 cases where all events are properly sequenced in time. Each case in the result has events with distinct timestamps.

**Insights:** By excluding cases with identical timestamps, you ensure your variant analysis is based on properly sequenced data. This provides more accurate cycle times, bottleneck identification, and variant frequencies since all events have clear temporal ordering.

### Example 3: Investigating Bulk Processing

**Scenario:** Your warehouse management system processed a large batch of shipments overnight. You want to identify which cases were part of the bulk processing where multiple activities (Pick, Pack, Label) might have been logged simultaneously.

**Settings:**

- Include cases with same-time activities

**Result:**

The filter identifies cases where multiple warehouse activities share the same timestamp. For example, Case #WH-7890 might show "Pick Items," "Pack Box," and "Generate Label" all timestamped at 2024-10-15 03:15:22.000, indicating bulk processing. If 200 shipments were processed in the batch, those 200 cases would be returned.

**Insights:** These cases represent bulk processing events where multiple steps were completed and logged simultaneously rather than individually. This helps you separate bulk-processed cases from normal sequential cases, allowing different analysis approaches for each processing mode.

### Example 4: Validating Real-Time Transaction Logging

**Scenario:** Your financial transaction system should log each step (Transaction Initiated, Validation, Authorization, Completion) with precise timestamps. You want to verify that your real-time logging is working correctly by finding any cases with timestamp collisions.

**Settings:**

- Include cases with same-time activities

**Result:**

The filter returns cases where two or more transaction steps have identical timestamps. Ideally, this should return zero cases in a properly functioning real-time system. If you find 15 cases out of 50,000 with timestamp collisions, these warrant investigation.

**Insights:** Cases with identical timestamps in a real-time transaction system indicate potential issues with event logging or system clock resolution. A small number might be acceptable, but a large number suggests systematic problems with your timestamp capture mechanism that should be addressed.

## Output

This filter operates at the case level and filters entire cases based on timestamp analysis:

- **Include mode:** Returns only cases containing at least two events with identical timestamps

- **Exclude mode:** Returns only cases where all events have unique timestamps

- Case and event attributes are preserved

- Event sequences and all other properties remain unchanged

- The filter performs exact timestamp comparison (including milliseconds)

Use this filter to identify data quality issues or to ensure your analysis uses only properly sequenced cases with accurate temporal ordering.

---

*This documentation is part of the mindzie Studio process mining platform.*

---

## Cases With Unchanged Attribute

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/cases-with-unchanged-attribute

Source: /docs-master/mindzieStudio/filters/cases-with-unchanged-attribute/page.md

# Cases with Unchanged Attribute

## Overview

The Cases with Unchanged Attribute filter selects cases where a specified attribute maintains the same value throughout the entire case. This case-level filter examines all events within each case and returns only those cases where every event has an identical value in the selected attribute. This filter is particularly useful for identifying processes where certain properties remain constant, detecting data quality issues, or finding cases that follow standardized patterns without variation.

## Common Uses

- **Data Quality Validation**: Identify cases where attributes that should change (like status or location) remain static throughout the process, potentially indicating data recording issues.

- **Process Standardization**: Find cases that were handled by a single department, resource, or system without handoffs or transfers.

- **Consistency Analysis**: Detect cases where key attributes like priority level, customer type, or product category remained unchanged from start to finish.

- **Single-Location Processing**: Identify cases processed entirely at one location or facility without transfers between sites.

- **Dedicated Resource Analysis**: Find cases handled by a single resource or team member throughout the entire process.

- **Static Configuration Detection**: Discover cases where configuration values or system settings remained constant, indicating stable processing conditions.

## Settings

**Event Attribute Name**: Select the event attribute you want to check for consistency. The filter will return all cases where every event has the same value in this attribute. The attribute must exist in your event table and be of a supported data type (String, Integer, DateTime, TimeSpan, Decimal, Boolean).

> **Note**: The filter uses exact value matching. For numeric and date attributes, values must be identical. For string attributes, the comparison is case-sensitive. Null values are treated as valid - if all events have null values in the selected attribute, the case will be included in the results.

## Examples

### Example 1: Finding Single-Department Cases

**Scenario**: You want to identify purchase orders that were processed entirely within a single department without any inter-departmental handoffs.

**Settings**:

- Event Attribute Name: "Department"

**Result**: The filter returns only cases where every event has the same department value, such as all events having "Finance" or all events having "Procurement".

**Insights**: These cases represent streamlined processes without departmental transfers. This can help identify:

- Departments with end-to-end process ownership

- Cases that avoided coordination overhead

- Potential best practices for process efficiency

- Simpler cases that didn't require cross-functional collaboration

### Example 2: Detecting Unchanging Priority Levels

**Scenario**: You need to find cases where the priority level never changed from start to finish, which could indicate either straightforward processing or a failure to escalate urgent issues.

**Settings**:

- Event Attribute Name: "Priority"

**Result**: The filter selects cases where all events have the same priority value (e.g., all "Low", all "Medium", or all "High").

**Insights**: This reveals:

- Cases that maintained their initial priority classification

- Potential issues where urgent cases weren't escalated

- Standard processing patterns for different priority levels

- Opportunities to implement dynamic priority adjustment

### Example 3: Identifying Static Status Cases

**Scenario**: Find cases where a status attribute never changed, which could indicate data quality problems or incomplete process execution.

**Settings**:

- Event Attribute Name: "Status"

**Result**: The filter returns cases where the status field has the same value in all events.

**Insights**: These cases may represent:

- Data recording errors where status updates weren't logged

- Cancelled or abandoned cases that never progressed

- System integration issues preventing status updates

- Cases requiring data quality remediation

### Example 4: Single-Location Processing

**Scenario**: Identify manufacturing orders that were completed entirely at one production facility without transfers to other locations.

**Settings**:

- Event Attribute Name: "Location"

**Result**: The filter selects cases where all events occurred at the same location.

**Insights**: This can reveal:

- Products manufactured at a single facility from start to finish

- Cases that avoided logistics complexity

- Location-specific capabilities and expertise

- Opportunities for centralized processing models

### Example 5: Consistent Resource Allocation

**Scenario**: Find cases where the same resource handled all activities, indicating dedicated case ownership.

**Settings**:

- Event Attribute Name: "Resource"

**Result**: The filter returns cases where every event was performed by the same resource or person.

**Insights**: These cases show:

- End-to-end case ownership by individual resources

- Potential efficiency gains from avoiding handoffs

- Resource specialization patterns

- Training opportunities for dedicated case handling

### Example 6: Uniform Customer Type Processing

**Scenario**: Identify cases where the customer type classification remained constant throughout processing.

**Settings**:

- Event Attribute Name: "CustomerType"

**Result**: The filter selects cases where all events have the same customer type value (e.g., all "Premium", all "Standard", or all "Enterprise").

**Insights**: This helps understand:

- Customer segments with stable classifications

- Processes tailored to specific customer types

- Consistency in customer categorization

- Patterns in how different customer types are handled

## Output

The filter returns a new dataset containing only the cases where all events have identical values in the specified attribute. Each returned case preserves all its original events and attributes. If a case contains any variation in the selected attribute's value across its events, that case is excluded from the results.

If no cases match the criteria (meaning all cases have at least one event with a different value), the filter returns an empty result set.

## Technical Notes

- **Filter Type**: Case-level filter (removes entire cases, not individual events)

- **Comparison Logic**: Uses the first event's value as a reference and compares all subsequent events to it

- **Null Handling**: Treats null values as valid and consistent - cases where all events have null values are included

- **Supported Data Types**: String, Int32, Int64, DateTime, TimeSpan, Single, Double, Boolean

- **Performance**: Efficiently implemented using LINQ with early termination when a mismatch is found

---

*This documentation is part of the mindzieStudio process mining platform.*

---

## Compare Attribute Values

Section: Filters

URL: https://docs.mindziestudio.com/mindzie_studio/filters/compare-attribute-values

Source: /docs-master/mindzieStudio/filters/compare-attribute-values/page.md

# Compare Attribute Values

## Overview

The Compare Attribute Values filter validates mathematical calculations by comparing the result of a computed operation against a stored result value. This filter performs arithmetic operations (addition, subtraction, multiplication, division) on two attributes and compares the calculated result with a third attribute containing the expected result. It can filter cases based on whether the calculation matches, differs from, or produces null results compared to the stored values.

The filter supports both case-level and event-level filtering depending on the source of the attributes. It includes tolerance handling for floating-point precision issues through a configurable threshold, making it ideal for data quality validation and anomaly detection in business processes.

## Common Uses

- **Data Quality Validation**: Verify that calculated totals match stored totals in financial data, identifying potential data entry errors or system calculation issues.

- **Invoice Verification**: Check that line item amounts (quantity * price) match the stored total amount, flagging invoices with calculation discrepancies.

- **Reconciliation Analysis**: Find cases where expected calculations don't match actual values, such as tax calculations, discount applications, or currency conversions.

- **Fraud Detection**: Identify suspicious transactions where calculated values don't align with recorded values, potentially indicating manual manipulation or system errors.

- **Process Integrity Checks**: Ensure that derived values in your process data are mathematically consistent with their source values.

- **System Migration Validation**: Verify data integrity after system migrations by checking that calculated fields remained consistent with their source values.

## Settings